Howdy, folks—my name is Lee, and I’m the SCW server admin. I don’t post often (or really ever!), but with Eric and Matt off for the day to recover from their marathon forecasting job, I wanted to take the opportunity to talk to y’all a bit about how Space City Weather works, and how the site deals with the deluge of traffic that we get during significant weather events. This isn’t a forecasting type of post—I’m just an old angry IT guy, and I leave weather to the experts!—but a ton of folks have asked about the topic in feedback and in comments, so if you’re curious about what makes SCW tick, this post is for you.

On the other hand, if the idea of reading a post on servers sounds boring, then fear not—SCW will be back to regular forecasts on Monday!

(I’m going to keep this high-level and accessible, so if there are any hard-core geeks reading here who are jonesing for a deep-dive on how SCW is hosted, please see my Ars Technica article on the subject from a couple of years ago. The SCW hosting setup is still more or less identical to what it was when I wrote that piece just after Hurricane Harvey.)

How much traffic just blew through?

So, fun stuff first. On a normal day, Space City Weather does something like 10,000-20,000 page views to something like 5,000-10,000 visitors. This traffic is generated from folks who specifically visit spacecityweather.com, so those numbers don’t include people who just read the SCW e-mail or just peep at posts on the SCW Facebook page. (“Page views” are a good metric for how much work your server has to do, with each “page view” representing one page of content that the SCW server has to send to you—a page like this article that you’re reading right now, for example. “Visitors,” on the other hand, is the absolute number of people who stopped by. Some visitors look at more than one article, so you’re typically going to have more page views than visitors.)

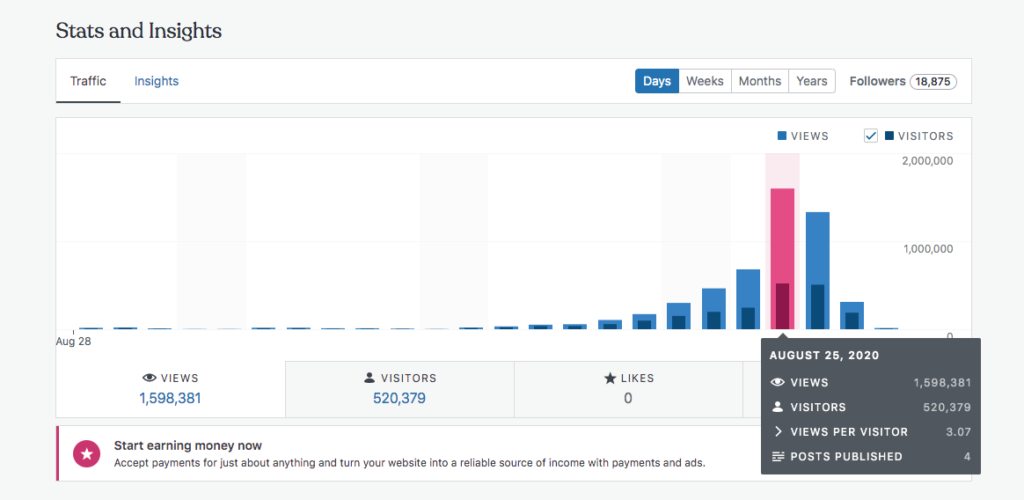

Our peak traffic day with Hurricane Laura was Tuesday, August 25, when things looked like they might go very badly for Houston. On that day, we clocked a smidge under 1.6 million views to about 520,000 visitors:

That’s roughly 80 to 160 times our usual daily traffic load, and even higher than the 1.1 million views we hit during the peak of Hurricane Harvey three years ago. And, unlike Harvey’s traffic, we ended up with not one but a pair of million-view days: August 25’s 1.6 million views were followed by another 1.3 million on August 26.

To put that load in terms of pages-per-second, SCW was serving up between 20 and 50 pages every second for hours at a time on both Tuesday and Wednesday. And the site did it without breaking a sweat.
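
For anyone who wants to check the arithmetic, here is a small Python sketch that turns the daily totals quoted above into multiples and average rates. The function and variable names are made up for illustration; only the numbers come from this post, and "a smidge under 1.6 million" is rounded up to 1.6 million.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def pages_per_second(daily_views: float) -> float:
    """Average pages served per second if views were spread evenly over 24 hours."""
    return daily_views / SECONDS_PER_DAY


def traffic_multiple(peak_views: float, normal_views: float) -> float:
    """How many times larger the peak day was than a normal day."""
    return peak_views / normal_views


# Figures quoted in the post (rounded).
NORMAL_VIEWS_LOW, NORMAL_VIEWS_HIGH = 10_000, 20_000
PEAK_VIEWS = 1_600_000
PEAK_VISITORS = 520_000

print(traffic_multiple(PEAK_VIEWS, NORMAL_VIEWS_HIGH))  # 80.0
print(traffic_multiple(PEAK_VIEWS, NORMAL_VIEWS_LOW))   # 160.0
print(round(pages_per_second(PEAK_VIEWS), 1))           # 18.5
print(round(PEAK_VIEWS / PEAK_VISITORS, 1))             # 3.1 pages per visitor
```

The 24-hour average comes out a little under 20 pages per second because it includes the quiet overnight hours; the busy stretches of the day are where the higher rates show up.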

How? Well, there’s the trick—we cheat a bit.

The stack

But first, before I explain how we cheat, I’ll talk just for a moment about the hardware and software that powers the site. Space City Weather is hosted on a dedicated physical server, rather than on any kind of shared hosting. That means we lease an actual physical computer from a hosting company named Liquid Web; the server lives up in Liquid Web’s Michigan datacenter. There’s a very good reason we don’t host the server in a Houston datacenter, even though there are many fine options—having your servers in the same place as the disaster you’re reporting on is not a strategy conducive to uptime, as we say in the biz.

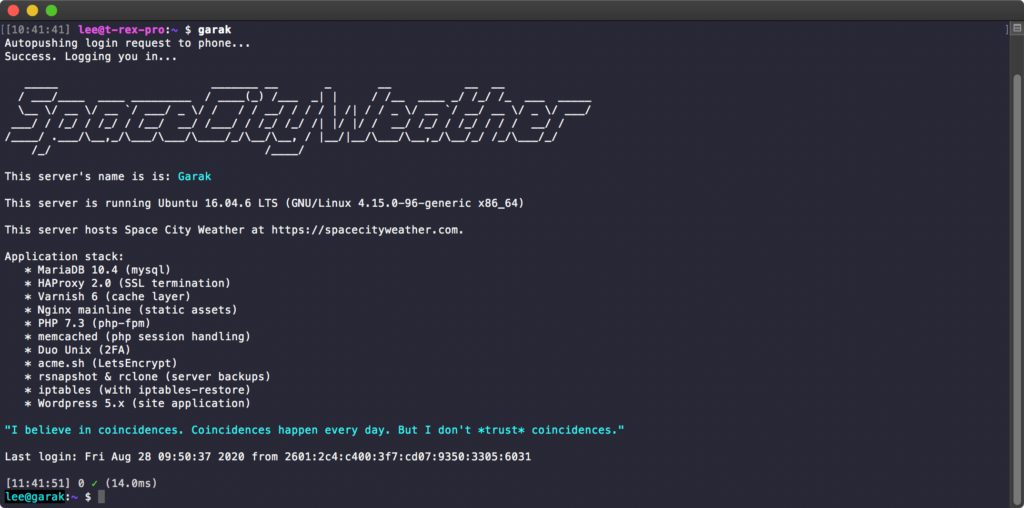

As Eric noted on Twitter the other day, SCW’s web server is named “Garak,” after the Cardassian tinker/tailor/soldier/spy from Star Trek: Deep Space Nine. Garak is an 8-core Xeon with 16GB of RAM and fast dual solid state disks, and he’s a workhorse.

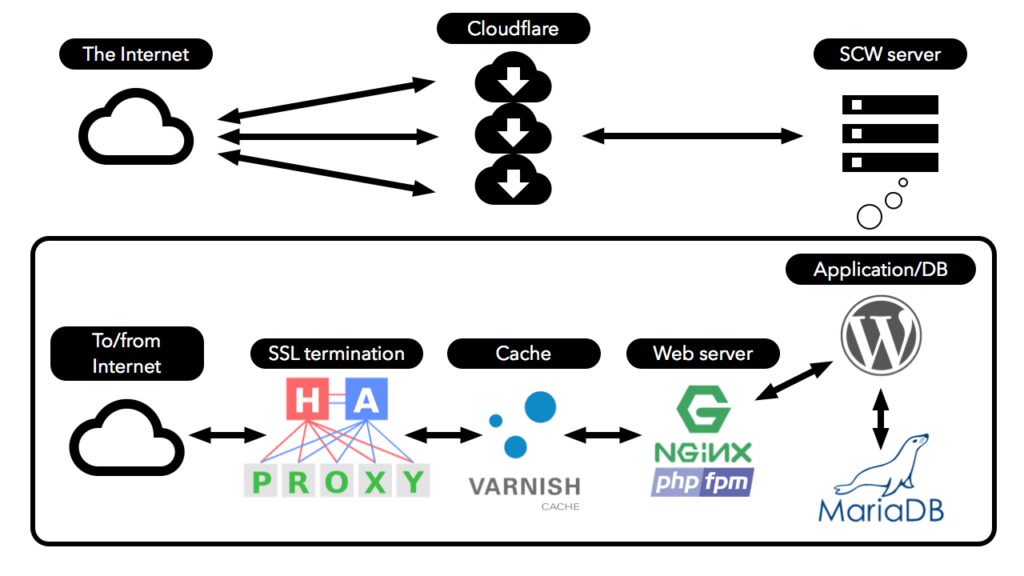

Garak runs the application stack that makes Space City Weather work. That stack includes WordPress, the actual blog application SCW runs—you’re reading a WordPress-generated page right now, and I’m writing this post in WordPress. Garak also runs Nginx (pronounced “engine-ex”), the actual web server application that sends you pages and pictures. There’s also a database to hold WordPress posts and configuration data, a heavy duty caching layer (using Varnish), and an SSL termination layer (using HAProxy) for keeping things secure while still taking advantage of cache.
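
If it helps to picture the layering, here is a rough Python sketch of the order in which a request passes through those pieces. It is a simplified mental model, not the actual configuration: the `Layer` class and `layers_touched` function are invented for illustration, and it assumes that a cached page is answered by Varnish without touching anything behind it and that every uncached request goes all the way to the database.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Layer:
    name: str
    role: str


# Front-to-back order of the software described above.
STACK: List[Layer] = [
    Layer("HAProxy", "SSL termination"),
    Layer("Varnish", "caching layer"),
    Layer("Nginx", "web server"),
    Layer("WordPress", "blog application"),
    Layer("database", "posts and configuration data"),
]


def layers_touched(cached: bool) -> List[str]:
    """Names of the layers a request passes through, front to back."""
    names = []
    for layer in STACK:
        names.append(layer.name)
        # Assumption: a cache hit is answered here and goes no deeper.
        if cached and layer.name == "Varnish":
            break
    return names


print(layers_touched(cached=True))   # ['HAProxy', 'Varnish']
print(layers_touched(cached=False))  # all five layers
```

The point of the ordering is that the expensive parts (WordPress and the database) sit at the back, so most requests never reach them.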

Why do we use physical hosting (which is admittedly more expensive) rather than cloud-based hosting at AWS or another cloud provider? The answer is simple: because physical hosting is easy. I don’t have to know or care how to have multiple cloud servers of varying capacities standing by to come online in the event of a traffic storm; we just always have the big gun (that is, the physical server) in place. Liquid Web’s rates are reasonable and it’s a cost-effective solution that trades money for time, and I’m always going to spend money to gain time if that’s an option.

The cheat

But here’s the thing—like I said, we kind of cheat at big traffic events a bit, and the way we cheat is by using Cloudflare.

In the simplest terms, Cloudflare is a service that absorbs your network load for you. Space City Weather is the kind of site that lends itself very well to caching—that is, most of Space City Weather’s pages don’t change very often, so you can take advantage of that and distribute copies of those un-changing pages out to Cloudflare’s army of servers. When the traffic storm arrives, Cloudflare’s monster servers send the unchanging parts of the site to visitors, and Garak only has to be bothered when something does change—when Eric or Matt create or update a post, for example.
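
For the curious, the basic idea can be sketched in a few lines of Python. This is a toy model of a cache sitting in front of an origin server, not a description of how Cloudflare actually works internally; the `EdgeCache` class, the `origin` function, the five-minute lifetime, and the `/laura-update` path are all made up for illustration.

```python
import time
from typing import Callable, Dict, List, Tuple


class EdgeCache:
    """Toy cache in front of an origin server (illustrative only)."""

    def __init__(
        self,
        fetch_from_origin: Callable[[str], str],
        ttl_seconds: float = 300.0,  # hypothetical lifetime for a cached copy
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch_from_origin
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[str, Tuple[float, str]] = {}
        self.hits = 0
        self.misses = 0

    def get(self, path: str) -> str:
        now = self._clock()
        entry = self._store.get(path)
        if entry is not None and now - entry[0] < self._ttl:
            self.hits += 1  # served from the cached copy; origin is not contacted
            return entry[1]
        self.misses += 1  # no copy, or the copy is too old: ask the origin
        body = self._fetch(path)
        self._store[path] = (now, body)
        return body

    def purge(self, path: str) -> None:
        """Drop the cached copy, for example after a post is edited."""
        self._store.pop(path, None)


origin_requests: List[str] = []


def origin(path: str) -> str:
    """Stand-in for the origin server; records every request it receives."""
    origin_requests.append(path)
    return f"<html>rendered {path}</html>"


cache = EdgeCache(origin)
for _ in range(1000):          # a thousand readers load the same post
    cache.get("/laura-update")
cache.purge("/laura-update")   # an author updates the post
cache.get("/laura-update")     # the next reader triggers one fresh fetch

print(len(origin_requests))      # 2
print(cache.hits, cache.misses)  # 999 2
```

A thousand and one page loads cost the origin only two requests here, which is the whole trick in miniature.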

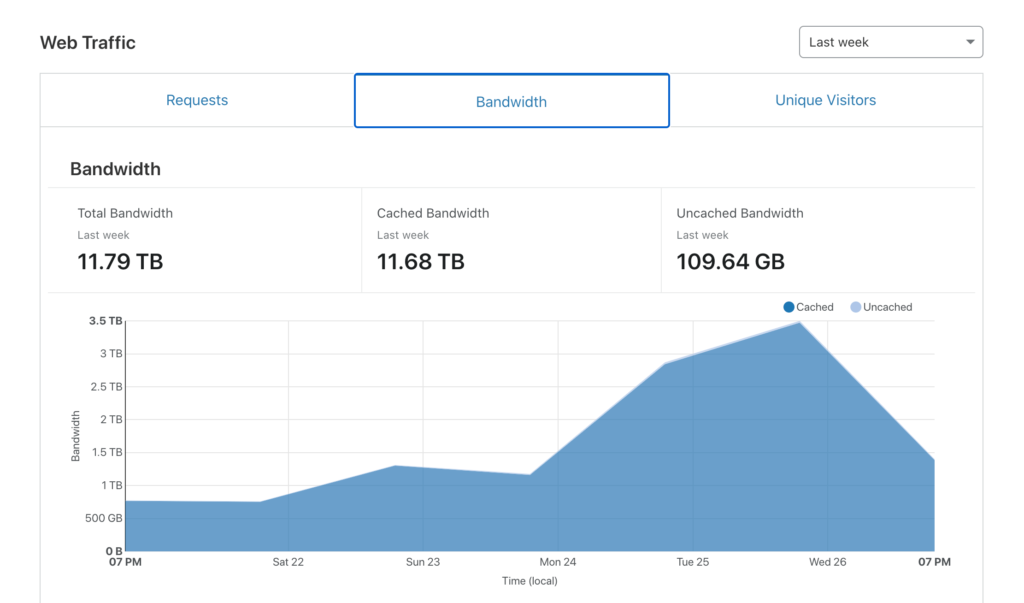

Spreading the load out to a paid service like Cloudflare is shockingly effective. How effective? This chart from Space City Weather’s Cloudflare dashboard tells the tale in a single picture:

Over the last week, Space City Weather served a bit under 12 terabytes of data to visitors. That is a stupidly ridiculously large amount of traffic. But out of that monster chunk of data, Garak only had to serve up about 110 gigabytes. A terabyte is 1000 gigabytes (or 1024 gigabytes, depending on what you’re measuring and who you’re talking to, but I’m trying to keep things simple), so Cloudflare handled about 99% of the Hurricane Laura traffic. This left Garak with only about 1% of the total work.
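
Here is that percentage worked out as a short Python sketch, using the rounded figures above and the 1000-gigabytes-per-terabyte convention. The function name is made up for illustration.

```python
GB_PER_TB = 1000  # decimal convention, as in the text above


def offload_fraction(total_tb: float, origin_gb: float) -> float:
    """Share of all bytes that were NOT served by the origin server."""
    total_gb = total_tb * GB_PER_TB
    return 1 - origin_gb / total_gb


fraction = offload_fraction(total_tb=12, origin_gb=110)
print(f"{fraction:.1%}")      # 99.1%
print(f"{1 - fraction:.1%}")  # 0.9%
```

Using 1024 gigabytes per terabyte instead shifts the result only in the second decimal place, so the "about 99%" figure holds either way.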

Considering that Garak’s monthly bandwidth allocation from Liquid Web is only 5 terabytes and every gigabyte over that comes with a penalty fee, we’d be looking at expensive overage charges without Cloudflare in place to handle the load. (And that’s not even addressing the fact that we’d probably need to spread things out between more than one physical server!)
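
To make the comparison concrete, here is a short Python sketch that sets one week of Laura traffic against the monthly allocation. The helper names are invented for illustration, and the per-gigabyte penalty rate is left out on purpose because this post does not state it.

```python
GB_PER_TB = 1000


def overage_gb(served_gb: float, allocation_tb: float) -> float:
    """Gigabytes served beyond the allocation (0.0 if under it)."""
    return max(0.0, float(served_gb) - allocation_tb * GB_PER_TB)


def allocation_used(served_gb: float, allocation_tb: float) -> float:
    """Fraction of the allocation consumed."""
    return served_gb / (allocation_tb * GB_PER_TB)


MONTHLY_ALLOCATION_TB = 5
WEEK_TOTAL_GB = 12 * GB_PER_TB  # everything served to visitors in one week
WEEK_ORIGIN_GB = 110            # what the origin server itself served

# If the origin had served everything itself:
print(overage_gb(WEEK_TOTAL_GB, MONTHLY_ALLOCATION_TB))   # 7000.0
# With the caching service in front:
print(overage_gb(WEEK_ORIGIN_GB, MONTHLY_ALLOCATION_TB))  # 0.0
print(f"{allocation_used(WEEK_ORIGIN_GB, MONTHLY_ALLOCATION_TB):.1%}")  # 2.2%
```

In other words, a single week of hurricane traffic would have blown through more than two months of allocation, while the origin's actual share used only a sliver of one month.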

Cloudflare more or less makes Space City Weather possible.

Funding, and the future

Fortunately, Cloudflare is also very kind to us on pricing. Because our monster traffic events are so rare and so peak-y (as opposed to constant and sustained), we get by on Cloudflare’s lowest-cost paid plan. That, along with all of our hosting and infrastructure costs, is handled via Reliant’s ongoing yearly sponsorship of Space City Weather. This enables us to keep the site very lean and focused on speed above all else, without intrusive ads, autoplaying garbage video, pop-ups, or any of that other annoying crap other sites have to festoon themselves with to earn money.

We’re nothing without our audience, and SCW readers are the best. As a life-long Houstonian born and raised, it brings me a lot of pride to see SCW thriving; this site is definitely a home-grown labor of love. And we hear your requests to help out, too—for new readers who perhaps just found SCW because of Hurricane Laura, we do have a fundraiser where folks can purchase SCW t-shirts, umbrellas, and a few other items. If you’re looking to score some sweet SCW merch, fundraiser time is the time to do it—that will be in November.

Thanks for taking the time to read this technical update, if you’ve made it this far, and thanks for stopping by the site. Eric and Matt will be back to forecasting on Monday, and we hope you’ll stick around!

I was always curious how SCW handled so much traffic. That was an interesting read. Thanks for the info Lee!

Thanks for the detailed explanation Lee. As a not that advanced almost a techie I found it fascinating. Plus I too wanted to help out and donate so thanks for the info on fundraising. I love that Space City Weather operates without annoying ads and pop-ups.

That was a well-written, informative, interesting write-up, accessible by this non-geek. Very well done, Lee, and greatly appreciated!

Thank you 🙂

Thoroughly enjoyed your article!

Thank you for all that you do. I tell people about your page by saying “They aren’t the ‘It’s The Storm Of The Century – we’re all gonna DIE!’ type of weatherguessers like on the local news stations.” God bless you for your devotion and long hours this hurricane season!

Except when it WAS the storm of the century and people were dying.

Knowing when the “wolf” is really coming to your door is important.

Thank you! Interesting read 😊

You sure handed over a lot of confidential information in this post. As a cyber security engineer, I’d say this is something that should be taken down or retyped.

Lol confidentiality like what dude? u think someone is sitting on a 0day for nginx and they’re going to attack now?

Great insight! Thank you to all of you. I look forward to giving a little support back in November.

I can always appreciate the tech behind the content and to have a reliable site running when stressing with what the weather is going to do is appreciated. Appreciate the engine that keeps the SCW going.

I love everything about this post. Geeky in the very best way. Thank you!

Hi, my name is Kevin, and I’m a weather nerd too.

Very good and reliable weather site. You are my go-to for weather details and forecasts.

Thank you so much for the informative look at the back end of SCW.

Fantastic work you all are doing. Being able to trust information during bad times is paramount.

Your explanation of your system is very interesting!

Thanks

Kudos to all behind the scene SCW support crew!!

You guys are awesome and the voice of reason when things get crazy weather wise. I rely on your weather not just during hurricane season but year round. Keep up the great work! It’d be nice if we could pick up some gear outside of the fundraiser, however I get it that you aren’t in the business of selling t-shirts

Thank you everyone at SCW!

Very interesting. Thanks

Live long and prosper!

Y’all are awesome! Thanks for the background info. Reading this and the weather posts feels like I’m talking to a friend across the table….

Thanks for your work, Lee, and thanks for explaining it! Appreciate the Space City Weather team so much.

An excellent presentation and thank you for taking the time after a busy to answer questions I have often wondered about. Great work and looking forward to contributing in November.

Well of course there would be another writer behind the scenes able to create ridiculously interesting content! Never would I have thought reading about servers and caching layers would keep my interest… you guys continue to surprise me! Can’t wait for the swag to drop!

Thanks very much to you (and to everyone else!!) for the kind words. For my day job, I work with Eric over at Ars Technica, and I’ve been lucky enough to be able to collaborate with him on some amazing stuff, including this dual-byline piece on Apollo 13. (I’m a very amateur space historian, and Apollo is totally my thing.)

Though the piece I’m most proud of is this discussion of the potential rescue plans for Space Shuttle Columbia. I was working in aerospace during the Columbia disaster, and it was very personally meaningful to be able to write about the event.

Incredibly interesting article about the Space Shuttle Columbia. It’s always hindsight and those government investigations (I work for DoD) that really highlight all the things that could have been done. It was a fascinating read.

Thank you so much for sharing! Read this evening and both were fantastic pieces! Here’s to more geeking out (and learning) in 2020…!

I’ve always been curious how you guys handle web traffic especially since like you said, it can be so “peak-y”. The work that you guys do is absolutely invaluable for Houston and Southeast Texas. I always thought that if there was a SCW Patreon or some other type of subscription that I would sign up in a heart beat. I hope that the extensive work that is done here is worth it to you all! It certainly is worth it for us.

Like all sysadmins, I am fundamentally lazy, and laziness is my core motivation. It’s all about building an infrastructure that I don’t have to care (much) about 😀

Appreciate the entire team who supports Space City Weather! You guys rock!

Thank you, Lee! I really enjoyed learning about the “ghost in the machine” that makes Space City Weather work.

You, Eric, and Matt need to know just how much we all lean on you for the best weather reporting available! Your timely and informative posts about the science that drives weather patterns help us to understand what’s happening without falling victim to an undercurrent of sensationalism.

In short, you keep us all grounded and prepared to deal with what comes our way. Thank you, thank you, thank you!

My love for Space City Weather has grown even larger with the behind the curtain peek, and the prominent DS9 reference.

To paraphrase the great Elim Garak, “my dear SCW, perhaps there is hope for you yet.”

While I understand close to zero about the technical details, you explained things in such a way that I do now have a broad brush grasp of how things work in general and it’s amazing! Thanks for taking the time. SCW is one of my best finds of 2020!!

Thank you for the kind words 🙂 I appreciate the praise—writing is my day job, and I try to do it well. The server admin stuff is just a hobby 🙂

I love your forecasts and the tone of your posts always. SCW was what kept me sane during Harvey, and you provided much needed clarity as Laura was maybe or not heading toward us. Thank you!

—A fellow Trek Nerd

The entire team does a fantastic job. Houston is lucky to have you all! Appreciate Reliant for its sponsorship!

Love this post in addition to the Wx stuff thank you (also a fellow Trekkie)!!

Thanks for the interesting information and for making SCW possible. I love reading the information without the dramatics!

Thank you for the interesting information. I wondered how it all worked during these events. Love Space City Houston!

Very informative look under the hood of the site. Thank you, Lee, for your support and dedication to SCW. You noted that many people rely on the site to make life-or-death decisions in times of severe weather and you are dedicated to ensuring the site is up so people can get the information they need to make those decisions. Your dedication to Eric and Matt’s mission is admirable. Thank you for your hard work in supporting these guys, their site, and their mission.

Thank you for the kind words. Truth: logging into the box and keeping track of the server during major events is actually one of the ways I distract myself from the weather—I’m just down here in League City, so I’m going through everything with you guys. Actually having something to do, even if it’s just making sure the server stays up, is a great distraction to have. It helped me get through Harvey and it helped a lot with the run-up to Laura!

Great work! Very much appreciated having a weather site we can depend on without the hype of the local news station guys. Keep up the good work.

Thank you Lee for the look behind the wizard’s curtain. I definitely know what I am buying my friends and family for Christmas! T-shirts or umbrellas for all!!

Thank you and keep up the amazing work you do for our community.

Lee…not surprised that Eric and Matt are tied to another person who really cares about what you do…strength in numbers and the THREE of you are providing one heck of a comforting service at a time greatly needed! I’m an ole guy that is extremely IT challenged…however, I can still receive/send Morse Code and on a really good day at 13 words per minute…

Milt, I’ll want to buttonhole you at some point for some space stories, once we get done with this COVID insanity. I’ll bring the beer if you bring the tales.

Lee…I got stories, some I can probably share now…I never turn down cold, craft beer…

In any endeavor, the best make everything appear to be so simple, smooth, and routine. That’s what you guys do and one only has to look anywhere else to see how consistently remarkable and valuable your work is compared to all of the “others” and how you make a complicated technical profession interesting, informative, and understandable. Thank you Space City Weather!

Thanks for the information. It’s always interesting to see how a website works. Keep up the good work.

Thanks Lee. Interesting read.

Keep up the good work.

I was hoping to purchase some stuff but I guess I’ll have to wait till November.

Enjoyed the technical (IT) side of how SCW works. I am new to the site. Moving to Galveston from out of state. Our realtor recommended it. I was grateful to have the updates before, during & after Marco & Laura. Thank you for what you do! Look forward to all of the future reports.

Thank you…a most informative read!!!

Lee—not a computer geek, but am absolutely grateful for your behind-the-scenes contributions to making Space City Weather the go-to Houston Weather resource for me and so many others! 🙏🏼

Thanks Lee for your behind the scene work😀

Now I feel guilty about staying on the site all night! Great look behind the scenes. Thank you for making it an interesting read that I could follow! Looking forward to the fundraiser…

Definitely don’t feel guilty! SCW is like Doritos—have all the pageviews you want, we’ll make more 🙂

I very badly want to know who named it Garak…

Oh, it was completely Eric’s idea. He is a much, much bigger nerd than you might think. (Like, “plays collectable card games” level of nerdiness! 😀 )

Thanks for sharing the details of what it takes to post a report. I’m new to SCW, but have already referred several friends to your site. I feel much better informed than simply watching the weather people on the news move from map to map to map, and I’m usually lost after the first one and can’t remember for 5 min what was said. Keep up the good work! We love you for keeping us informed and safe.

Please get a website just like this for the DFW area. Today’s forecasters accuracy is abysmal…..most of the time. (I’m a Reliant Energy customer!)

Thanks for all you guys do!!

Don’t put off that update to 18.04 too long!

We’ll be switching onto a new dedicated box with 20.04 pretty soon—just waiting until after the season!

Thanks for sharing, that was an interesting read!

This is why I keep Reliant as my service provider, they sponsor this site which is my daily go-to for weather.

Thanks for a great explanation!

I’d donate to a tip jar, even outside of fundraising season. Maybe this conflicts with the sponsorship model, but dammit take my money! 🙂

Thank you Lee, Eric And Matt for being there.

Thanks! I’m also an old angry IT guy, so this was fascinating. It made me a little less angry, if not any less old.

Not angry at SCW, though. It’s the best thing out there.

Awesome article. Thanks for the kitchen tour!

Thanks for the info! It’s fascinating! I love SCW. It’s my go to weather source that I have trusted for many years!! I love my SCW t-shirt and wear it proudly!! Keep up the great work!

Thanks for the interesting info

Lee – much like Eric and Matt – you kick ass. Please continue to do so.

Where to start….How about with Thanks for the teamwork!

Reading this post and the comments (62 by the time I got to this), makes me feel better connected to everyone. Being a Trekkie, having a better than average IT understanding, playing cards too (shhh, I won’t tell the secret), my fascination with weather….well, for obvious reasons, I love this site and my jaw dropped upon seeing the figures.

Especially knowing that I leave it open and cached and merely refresh to read updates. I did not know about Space City Weather during Harvey, but wish I had and I send the link to everyone I know now.

Learning about the systems aspect with your post is way cool and I appreciate everything the team does to ensure complete, accurate and timely information to the masses.

I’m in agreement with another poster….what if we want to donate outside the annual fundraiser? I know the company you use requires a fee when purchases are made or even when cash is donated, which I don’t like….I want 100% of my money to go to Space City Weather and their operations.

As a Tax professional, I am always thinking about this from that perspective.

So, in conclusion, all I can offer is this: should any of you ever need free tax advice..(I am IRS certified)….feel free to reach out….it’s how I give back to the community.

Thank you, Lee, for an interesting peek behind the scenes, and for simplifying the process for those of us who may be less talented in that direction. 1.6 million views on the 25th is astonishing! On the other hand, there was a certain Balance of Terror in the atmosphere, when 520,000 of us were wondering if Houston was fated to become a City on the Edge of Forever…. 😉 Awfully glad to have you and Eric and Matt around, fair weather or foul!

Thanks! This is very interesting!

That was a great post! Kudos to all the team and to Reliant for sponsoring SCW! Like others posting here, I too want some merch before the official fundraiser. Thanks so much to the SCW team for all your hard work. Your efforts are much appreciated. 😘

For those who have not bought or seen the SCW t-shirts, they are heavy duty with well done graphics. Thankfully each year’s offerings brings new colors so I don’t get the question “didn’t you wear that shirt yesterday?”.

I did not understand much of what you wrote, but I got the point! You are my new favorite on the team! Sincerest appreciation to you guys for all you do.

As a geeky woman this was a great read!!! Thank you for all your hard work too!!!

Well, when I saw y’all mentioned in the Category 6 Disqus section as someone to follow for accurate unhyped info I thought … there goes access to my favorite weather blog… and clearly your volume increased. Thanks for all y’all do…

Very interesting. Before I retired 3 years ago I was dealing indirectly for some similar items for our software download pages. We were hosting using IBM and using cache not so much for load leveling but for providing reasonable response times around the world. I cannot believe it but I don’t remember the name of the caching service we used.

Very interesting. Thank you. Love you guys!!

Thanks for the Tech talk Lee, appreciate all you guys do at SCW.

I love how informed I feel after reading a SCW post. After reading Eric’s, Matt’s and now Lee’s words, I have been given an educational peek into a world that I had zero knowledge of prior. Additionally, I have been humbly shown why there are “experts” who are paid to do these jobs and I am reminded that although any one of these geniuses may not be perfect, I am far from being able to do even 1/16th of their job. Thank you for the lessons full circle.

Shut up and take my money! I can’t wait til November 🙂

Bring on the fundraiser! I love you guys!!

DS9 references? This site is even cooler than I imagined. I’ve shared the link far and wide, because the fact that you guys report on not just the hurricanes that threaten TX means that we can help you inform a lot of people in a storm’s path through word of mouth (or keyboard). Good work on building the system to handle a sudden load like that; we’d freak out if SCW went down as a storm was approaching.

Marking my calendar for some Nov shopping.

Thanks Lee for explaining your use of Cloudflare and for keeping this site loading so fast! At times such as these weather events it’s very nice to be able to load Space City Weather cleanly and quickly.

Lee – fabulous write-up. I really enjoy the casual style of writing – it’s all about communication!! Smart work and we do appreciate Reliant’s part in this blog.

Eric and Matt – Apologies. I was hiding my knowledge of this blog because I sounded brilliant to my family, friends, colleagues, contractors, etc. Blast it, you guys have become too popular so I gave up and now recommend it to everybody. Secret is out – you do a fabulous job AND enjoy you on NPR as well.

We’re just glad it works. We depend on you, even though we’re in Beaumont.

Loved the tech note. Love you guys. Why don’t you open your online shop NOW, so we can buy stuff and support you?

I just figured out you created the Chronicles of George… I love that site. Spent many hours reading George’s hilarious tickets (having worked on a helpdesk myself).

Thanks Lee! And all y’all!! For being real !

Interesting summary, Lee, and I’m glad SCW weathered the traffic storm. But I ran the site through a few popular website speed tests, including Google PageSpeed, GTmetrix, and Pingdom. The results were not good. I’m no expert in WP speed optimization, but it seems like there’s more to be done. I don’t know how much the Jennifer theme is impacting things, but I’m a long time fan of GeneratePress as one of the lightest and fastest themes with amazing support and useful features. I have no association with them other than being a very satisfied user. I don’t build WP without GP. generatepress.com

Yeah, the site is as reasonably performant as I can make it be, but I’m not a developer by any stretch of the imagination, and the theme SCW is using is pretty old—I’ve treated it more or less like a black box, since there’s nothing I’d know how to do with it anyway.

But definitely thanks for the note about GeneratePress. I’ll take a look!

“without intrusive ads, autoplaying garbage video, pop-ups, or any of that other annoying crap other sites have to festoon themselves with to earn money.”

Outstanding!!! 😍

What fantastic details! Thank you, Lee!

That was awesome. Just the right amount of geek.

Fun Read…. granted, i’m a Linux Sys Admin… so i’m interested to see how others live.

From one IT geek to another, thanks for this post!!

Wonderful post!! I always love it when people can articulate exactly what their stack is and how it works – without resorting to mumbo-jumbo. Awesome server name too!

I am one of the early addicts of this site…..right from the time Eric came out from the Houston Chronicle and started this…When was it? Maybe 2008? Thank you all for everything you guys do.

Fascinating and funny. Garak was my favorite DS9 character. Thanks for doing what you do and doing it so well!

A really interesting post! Thank you for explaining how the magic happens

Thank you for all you and the team do

It is invaluable when you have friends and family spread out between Louisiana and Texas coast.

Awesome write up! Thanks for being part of my favorite website.

Eric, Matt, Lee,

Impressive server setup. Very elegant design.

Thank you for your hard work and your real focus on your audience. I can’t appreciate enough that you secured the sponsorship with Reliant. It is a big deal, since we only see one reputable sponsor. You guys are the reason I stick with Reliant as my provider.

East Texas is Team Space City Weather! You guys help us out so much. I was able to trot along behind Lee’s informative explanation with only one or two brain overloads. Thank you, Lee! I hope “my boys,” Matt and Eric, are having a restful weekend!

Trudy – Longview Tx

Great work and thanks for sharing! As a Cloud Architect who uses this site daily… I loved this post but do worry about the single server architecture. I suggest you reconsider cloud given you can still use this architecture, save money, and can scale out while having multi-region DR if needed. Give Garak some friends to help!

I hear you, but IMO the value prop just isn’t there. (Famous last words, right? Hah!)

The current config is reasonably simple, as far as these things go—ssl termination, cache layer, web server, app + db layer. And I can run WordPress in single site mode, without any HA or cluster tricks. And the stack has been pretty extensively battle-tested, having been through Harvey and also now Laura (which did more than twice as much raw traffic as Harvey). With the site’s workload being so immensely cache-friendly, I’d be more worried that Cloudflare would kick us off the service due to demand spiking than I’d be worried about the actual origin server. The busiest process on the box during the traffic storm was HAProxy, doing all its connection magic. And even then, server load never got above about 0.2 – 0.3. The single physical box is, from a perspective of resourcing and workload, pretty grossly overprovisioned.

But I like that, since it gives effectively unlimited headroom for Eric and Matt to try stuff out and not have to bother with prod resource contention. And, like I said in the post, it’s just not that terribly expensive—we’re using Liquid Web’s smallest dedicated phy box, and you can look up the pricing if you like. Considering the CPU, RAM, IO, and bandwidth that gets me, along with dev/test headroom, and it seems like a more than reasonable price.

Transitioning to a demand-based setup with scaling would involve a lot of time, since I’d have to go teach myself how to do it right (though at that point I’d crib from my coworkers at Ars, since Ars moved onto AWS about a year ago). I’d also want to re-architect the whole stack at that point, heh.

We’re good for the foreseeable future staying on phy hosting, but in a few more years, who knows? The value prop might be there, depending on what the landscape looks like!

I discovered Space City Weather a few years ago and don’t remember exactly how and when, however I am a loyal reader because Eric and Matt “tell it like it is”. I love the straight facts with no hype. You guys “rock”!!! It’s great to receive information consistently and with honesty. Thank you Lee for the “behind the scenes” look and for what you do to keep things running smoothly! Way to go Space City Weather!

Garak….just plain, simple Garak.